Your hiring process was built for a world that no longer exists

Three years ago, a background check told you something reliable. A CV reflected effort. A reference letter came from the person whose name was on it. An interview gave you a reasonable read on whether someone could do the job.

None of that has disappeared. But all of it has become less certain.

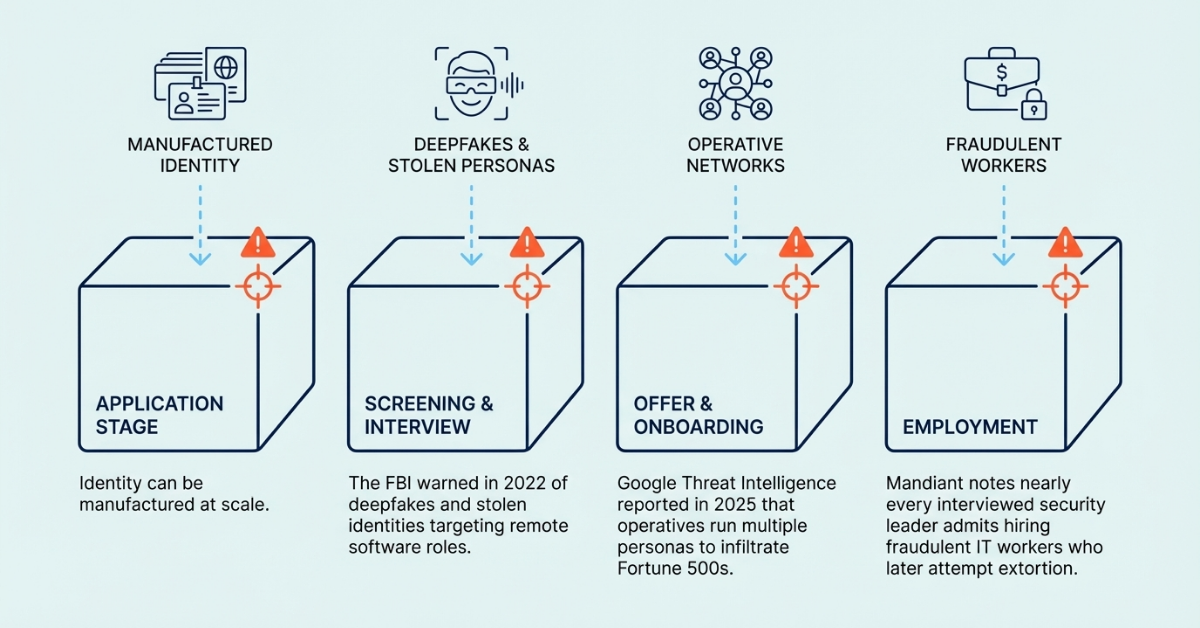

AI is changing what counts as credible evidence in hiring and workforce management. Candidates are using ChatGPT to generate tailored employment histories that map precisely to job descriptions. AI image tools produce convincing qualification certificates. Real-time coaching tools feed suggested answers during video interviews. In verified cases, the person on the video call wasn't the person who turned up on day one. The FBI's Internet Crime Complaint Center flagged this pattern back in 2022, warning of deepfakes and stolen personal information being used to apply for remote roles, particularly in IT and software development where the successful applicant would have access to sensitive company data.


It's got worse since. Google's Threat Intelligence Group reported in 2025 that North Korean operatives have infiltrated hundreds of Fortune 500 companies by fabricating identities, running multiple personas simultaneously, and using AI-generated profiles to pass hiring checks. Mandiant's CTO told the RSAC 2025 Conference that nearly every CISO he'd spoken to had admitted hiring at least one fraudulent IT worker. When those operatives were discovered and terminated, some extorted their former employers with stolen proprietary data and source code.

These aren't isolated incidents. They're what happens when identity can be manufactured at scale and verification systems haven't adapted. And most organisations are still operating as if the old model holds. Run the checks. Review the documents. Make the hire. Move on.

What AI is actually changing

AI is compressing the distance between authentic and fabricated. A polished CV used to be a reasonable proxy for competence, or at least for effort. Now it's a proxy for access to a chatbot. A clean reference letter used to mean someone thought highly of you. Now it might mean someone prompted a language model with your LinkedIn URL.

Gartner's Q4 2024 survey of over 3,000 candidates found that 39% used AI during their most recent application process, primarily to generate CV text, cover letters, and assessment answers. The problem isn't that candidates are doing this. Many are, and it would be odd to expect otherwise. The problem is that employers are still reading these signals as if they carry the same weight they did five years ago.

Meanwhile, the OECD's 2025 employer survey of more than 6,000 firms across six countries found that algorithmic management tools are already widespread: 90% adoption in the United States, 79% across Europe. Managers reported improved decision quality, but two-thirds also flagged concerns about unclear accountability and an inability to follow the logic behind algorithmic decisions.

AI is being adopted quickly. The governance structures around it aren't keeping pace. And the consequences of that gap are already materialising.

An organisation that hires someone using fabricated credentials faces more than a bad hire. It faces potential data exfiltration, intellectual property theft, regulatory penalties, and reputational damage it may not discover for months. An organisation that can't explain how its AI screening tool made a decision faces discrimination claims it can't defend. An organisation that verified someone at onboarding and never looked again faces liability for what changed in between.

The question isn't whether these risks are real. It's whether your current systems are designed to catch them.

Authenticity: when proof stops proving anything

The most immediate trust problem is authenticity. AI makes it easier to fabricate credentials, manufacture identities, and produce materials that look indistinguishable from the real thing. Synthetic qualifications, AI-written references attributed to real people, LinkedIn profiles built to pass a visual check. The FBI's 2022 alert described applicants whose lip movements didn't match their audio during video interviews. Google's 2025 reporting described operatives maintaining a dozen fake personas across multiple countries, with fabricated references from other personas they controlled. Gartner predicts that by 2028, one in four candidate profiles worldwide will be fake.

If your hiring process still treats a clean CV and a matching LinkedIn profile as sufficient evidence, you're relying on signals that can now be generated in minutes. Traditional verification is necessary, but it's no longer sufficient on its own. The question employers need to ask isn't "did this person provide the right documents?" It's "what would we miss if every document in front of us looked perfect?"

We examine this in detail in "The CV on your desk was probably written by AI. So how do you trust who you're hiring?", which looks at how AI is degrading hiring signals and what it means for proof, judgment, and candidate assessment.


Fairness: trust runs in both directions

Most of the AI-and-hiring conversation focuses on what employers need to worry about. But candidates are worried too, and their behaviour is already shifting in ways that affect hiring outcomes.

A Q1 2025 Gartner survey of nearly 3,000 job seekers found that only 26% trusted AI to evaluate them fairly. A quarter said they trust employers less when AI is involved. Offer acceptance rates have dropped from 74% in mid-2023 to 51% in mid-2025. That's not just a sentiment shift. It's a measurable commercial problem: longer time-to-fill, higher cost-per-hire, and the best candidates selecting out before you reach the offer stage.

Academic research supports the pattern. Studies published in the Journal of Business Research (2024) and related work from 2025 show that lower trust in AI-led evaluation reduces job acceptance intentions, and that perceptions of fairness depend on whether the process feels explainable and credible. Disclosing that AI is involved, without explaining how it's used, can actually make trust worse.

Regulators are paying attention. The EU AI Act classifies recruitment AI as high-risk, with transparency, human oversight, and bias auditing obligations enforceable from August 2026. In Australia, a parliamentary committee recommended in February 2025 that all AI used for employment purposes be classified as high-risk, and Privacy Act reforms will require disclosure of automated decision-making from December 2026. Singapore's Model AI Governance Framework and MAS guidance on AI risk management are voluntary, but they set expectations that are increasingly difficult to ignore. The direction is the same everywhere: if you're using AI in workforce decisions, you need to be able to show your working.

If your organisation can't explain how a hiring decision was made, or which criteria were weighted, or where automation played a role, you're exposed to regulatory action, discrimination claims, and candidate attrition simultaneously.

"If you can't explain how you made that hire, you're already exposed" examines the legal, regulatory, and governance dimensions of this problem.


Continuity: trust doesn't freeze at onboarding

A clean background check tells you what was true at one point in time. It says nothing about what's changed since.


This has always been a limitation, but it's widening. Workforces are more distributed. Tenures are shorter. Contractor and contingent labour models mean people move in and out of access and responsibility faster than static controls can track. The ILO's November 2025 working paper on AI in human resource management highlighted a structural problem: organisations tend to equate quantification with objectivity, and systems built on fixed assumptions break when the environment becomes fluid.

Google's Threat Intelligence findings illustrate what that looks like in practice. North Korean operatives didn't just get hired. They maintained access, moved laterally through systems, and extorted former employers with stolen data after being terminated. One operative ran at least 12 personas across Europe and the US simultaneously. The risk didn't begin at hiring. It compounded over time, undetected.

If your organisation verified someone three years ago and hasn't revisited that assessment since, you're carrying risk you can't quantify. Credentials decay. Circumstances change. Roles expand. Access grows. And in a workforce shaped by AI, remote work, and faster-moving threats, the gap between what you checked and what's actually true gets wider every month.

"You verified them three years ago. What's changed since?" makes the case that static verification is increasingly out of step with how work operates, and that trust needs to be revisited over time.


Accountability: the question that matters most

The OECD's survey found that managers themselves struggle to follow the logic of algorithmic decisions, even as they report that those decisions feel better. The ILO's research showed how flawed objectives, biased training data, and opaque programming can undermine the very outcomes AI is supposed to improve. These aren't theoretical risks. They're the operating conditions most organisations are already in.

The UK government's DSIT published guidance in March 2024 on responsible AI in recruitment, calling for assurance mechanisms across the full procurement and deployment lifecycle: governance frameworks, impact assessments, bias audits, performance testing, and user feedback systems. The EU, Australia, and Singapore are all moving in similar directions with varying degrees of binding force. The timeline is tightening.


An organisation that automates workforce decisions without the ability to explain them isn't just creating regulatory risk. It's creating a system that can't learn from its own failures, can't be audited with confidence, and can't withstand scrutiny from regulators, candidates, or its own board. Something will go wrong; in a system you can't fully explain, it's a question of when, not if. When it does, the damage is determined by whether you can demonstrate what you knew, what you did, and what you'd do differently.

"The future belongs to accountable systems, not AI for AI's sake" argues that the defining line is between systems that can prove how they work and systems that can't.


Trust as infrastructure

These four problems — authenticity, fairness, continuity, accountability — aren't separate issues. They're connected pressure points in a system that most organisations haven't yet redesigned for what AI is making possible.


The old model treated trust as a series of checkpoints: verify at hiring, comply with regulations, respond when something goes wrong. That model was built for a workforce that moved slowly and a threat environment that was mostly analogue. Neither condition still applies.

If you're reading this and recognising gaps in your own process, that's the point. The question isn't whether AI is relevant to your workforce. It already is. The question is whether your trust systems reflect that, or whether you're relying on a model that was designed for a different era and hoping it holds.

What replaces it isn't a single tool or a better background check. It's an approach that treats trust as something engineered into how you hire, monitor, govern, and respond, continuously rather than episodically. The organisations that get this right won't just be better protected. They'll be the ones that candidates, regulators, and boards actually believe.


